You may wonder, is LocalAI good enough? Do we understand it? Can it be used to do something good?

Short answer: Yes.

First of all, what does it look like?

In the video below you can see what it looks like on my home setup: a workstation with an RTX 3090, 24 GB of VRAM, and 64 GB of RAM. It runs a local Qwen 3.6 (dense) via the Hermes agent.

So this is the setup from a hardware point of view, but you need more.

What do you need to make it work

First, you need some hardware to run it on. I read that some folks managed to run small models on 8 GB or less, and I can testify that it works on 24 GB of VRAM.
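As a rough rule of thumb for sizing, the memory taken by the weights is about the number of parameters times the bits per weight. The sketch below is only that arithmetic: the model sizes are made up for illustration, and it ignores the context cache and runtime overhead, so real usage will be higher.

```python
def estimate_weight_memory_gb(params_billion: float, bits_per_weight: int) -> float:
    """Rough memory for the model weights alone, in GB (1 GB = 1e9 bytes)."""
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Hypothetical model sizes and quantization levels, for illustration only.
for params in (7, 14, 30):
    for bits in (16, 8, 4):
        gb = estimate_weight_memory_gb(params, bits)
        print(f"{params}B parameters at {bits}-bit: ~{gb:.1f} GB for weights")
```

This is why quantized models are the usual choice on consumer cards: halving the bits per weight halves the space the weights need.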

Then, the next important bit: how to interact with the agent.

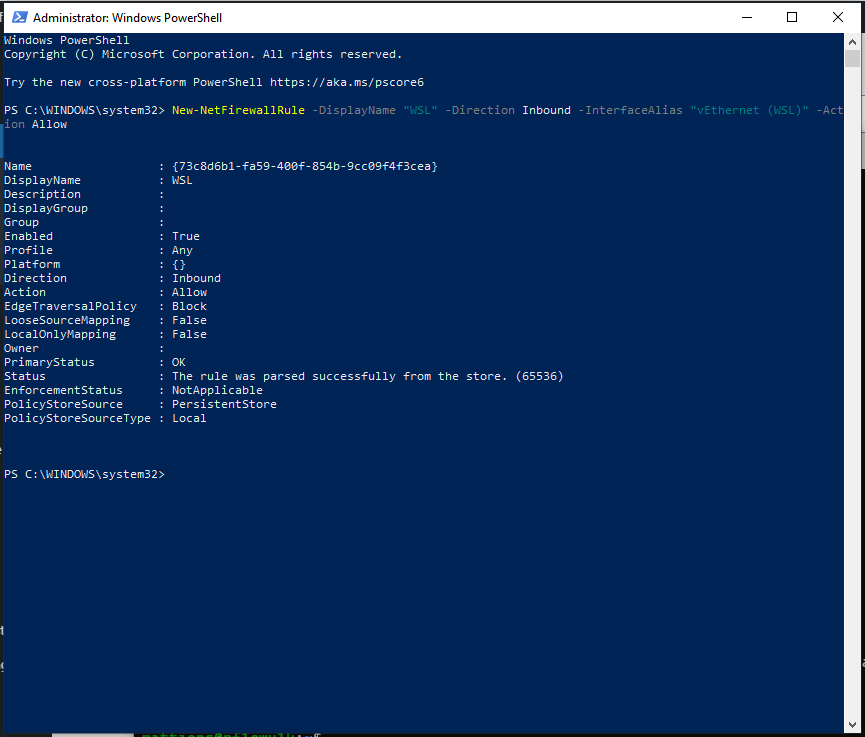

You need to give your agent a dedicated identity. I’m not talking about the SOUL.md and other stuff like that, I’m talking about permissions. The best analogy is that you are getting a new “colleague” who will need their own email, GitHub username and so forth. This is the bit that makes it work.

Then, you need to know how to delegate. This is extremely important. If you do not give clear direction on what the task is, what the goals are, what success looks like and how to measure it, things get really hard.

You also need to give the agent the ability to assess whether the result is good.
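One way to keep yourself honest about delegation is to write the brief down as a small structure before sending it. The sketch below is just an illustration of the four ingredients (task, goals, success, measurement); `TaskBrief` and its field names are hypothetical, and the example content is made up, not the message I actually sent.

```python
from dataclasses import dataclass, field


@dataclass
class TaskBrief:
    """A delegation brief: what to do, why, and how to tell if it worked."""
    task: str
    goals: list[str]
    success_criteria: list[str]
    checks: list[str] = field(default_factory=list)  # how success is measured

    def missing_parts(self) -> list[str]:
        """Names of the sections that are still empty."""
        parts = {
            "task": self.task.strip(),
            "goals": self.goals,
            "success criteria": self.success_criteria,
            "checks": self.checks,
        }
        return [name for name, value in parts.items() if not value]

    def to_message(self) -> str:
        """Render the brief as plain text to send to the agent."""
        def bullets(items: list[str]) -> str:
            return "\n".join(f"- {item}" for item in items)

        return (
            f"Task: {self.task}\n\n"
            f"Goals:\n{bullets(self.goals)}\n\n"
            f"Success looks like:\n{bullets(self.success_criteria)}\n\n"
            f"How to check it:\n{bullets(self.checks)}"
        )


# Made-up example brief, for illustration only.
brief = TaskBrief(
    task="Build a small web app that generates QR codes",
    goals=["Paste a URL and get a QR code image"],
    success_criteria=["The generated code scans correctly on a phone"],
    checks=["Run the test suite", "Scan a sample code by hand"],
)
print(brief.missing_parts())  # empty list when every section is filled in
print(brief.to_message())
```

If `missing_parts()` returns anything, the brief is probably not ready to hand over yet.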

What can you do with it?

You can do lots of things.

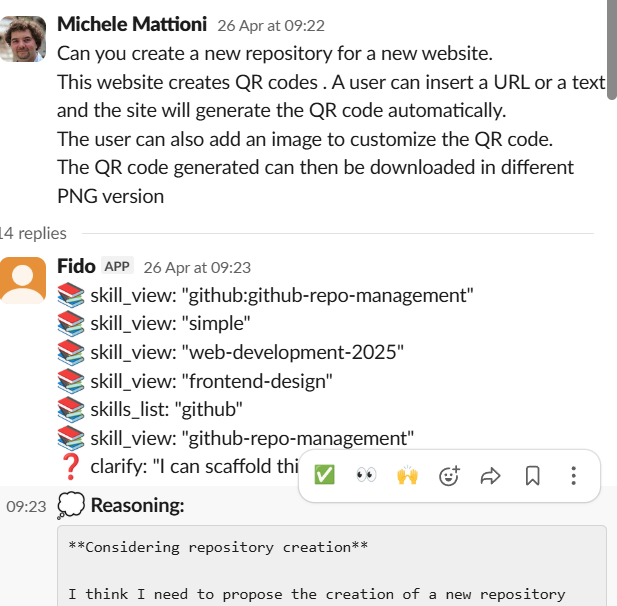

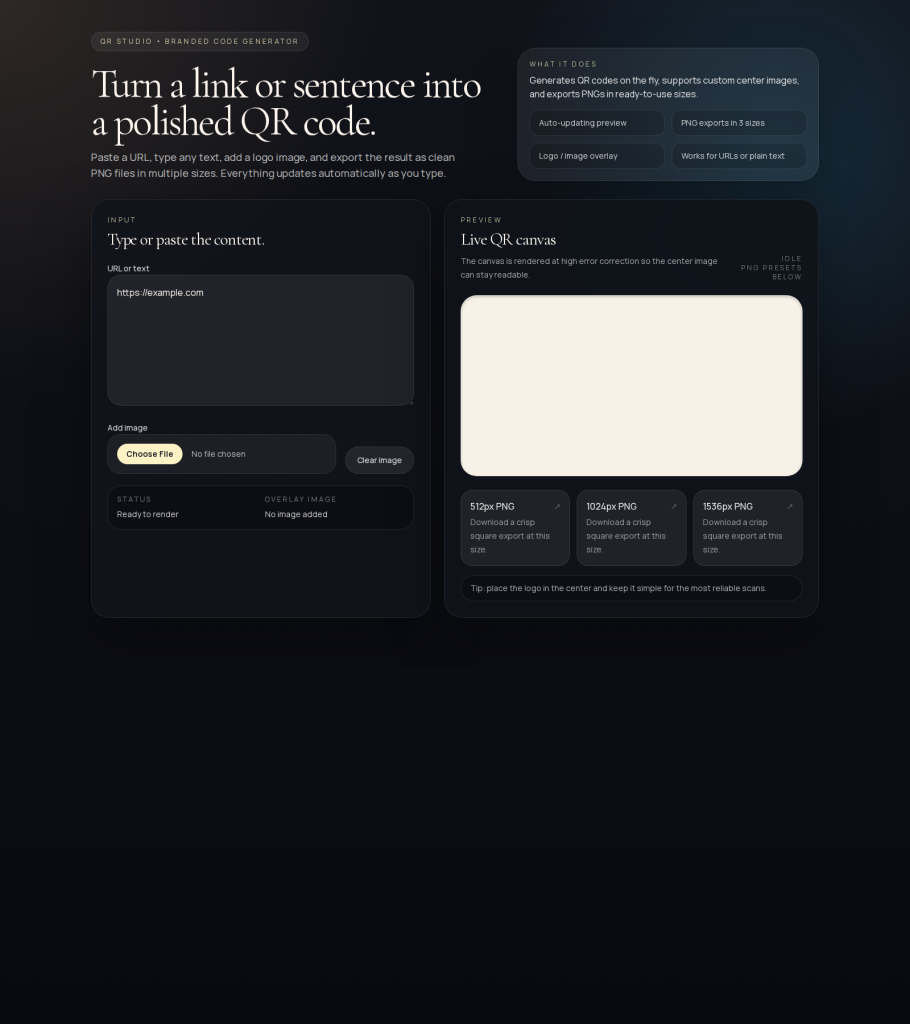

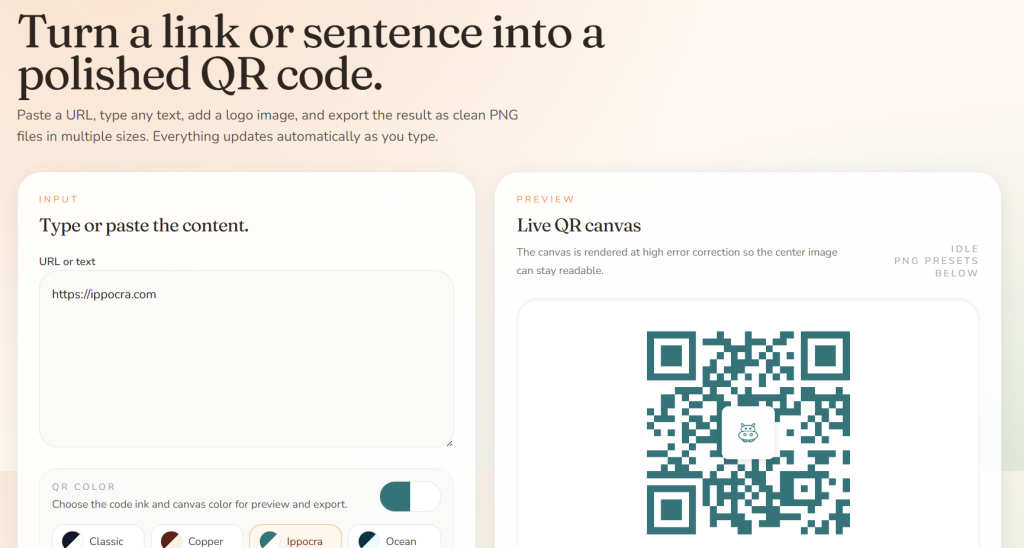

1. Completely new web application from scratch for QR codes

I’ve created a QR code application from scratch, and it is now hosted at https://qrcode.ippocra.com.

How was this put together? With this simple message:

This is the first version

With a little bit of help and direction, this is the final version

2. Create an Instagram carousel for Ippocra

With Ippocra (https://ippocra.com) I wanted to see if we could create content for the company Instagram account.

This is the first attempt:

https://www.instagram.com/p/DXZE19bDPHG/

My second attempt was this one:

Here is the full carousel:

https://www.instagram.com/p/DXeMNlqjKwL

It took some new skills, a dedicated brand page on Ippocra, and some work, but I’ve managed to get something like that working.

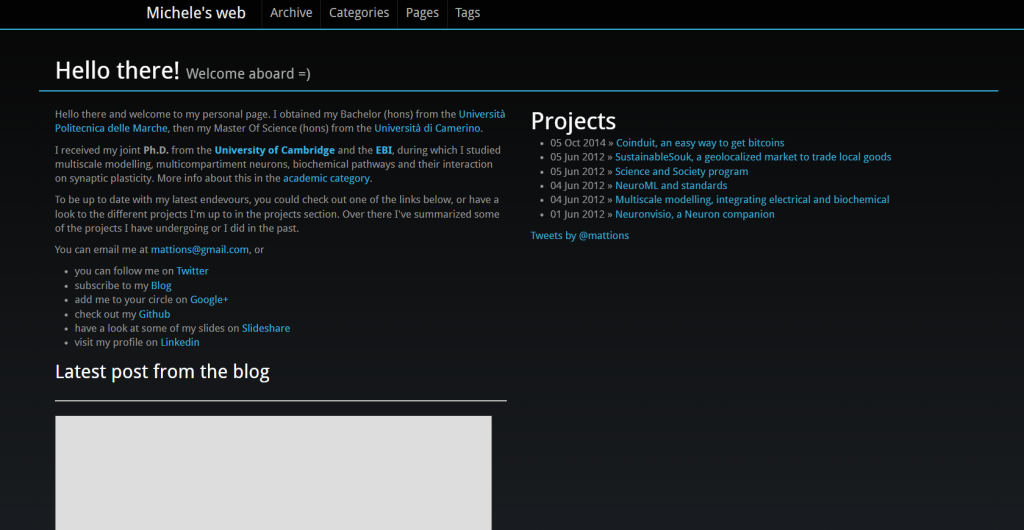

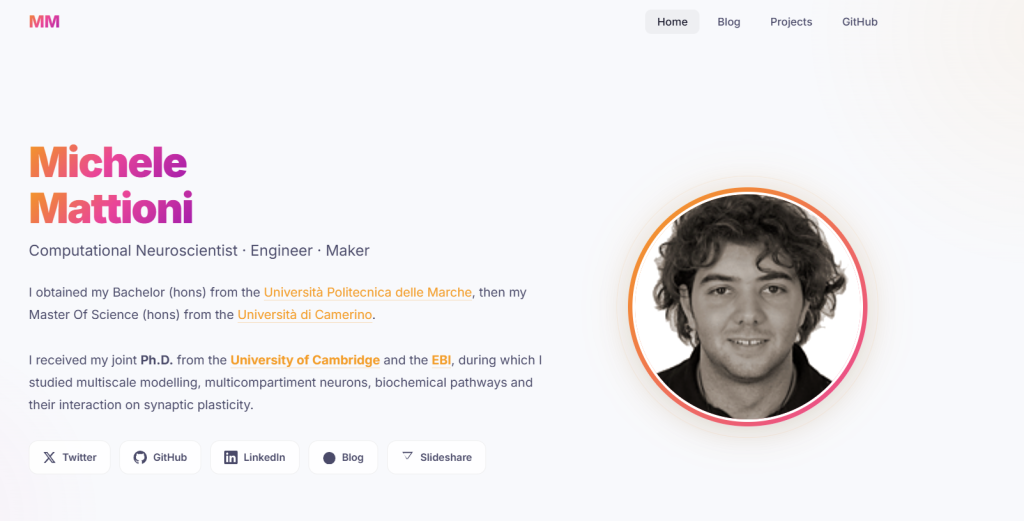

3. Rework my old personal website

I wanted to refresh my old personal website, given that the connections to the blog posts and to Twitter were broken. So I asked my local agent to help.

As you can see from this series of GitHub PRs:

- PR2 — did not like it: https://github.com/mattions/mattions.github.com/pull/2

- PR3 — a bit better, but not exactly what I was looking for: https://github.com/mattions/mattions.github.com/pull/3

- PR4 — now we are talking: https://github.com/mattions/mattions.github.com/pull/4

All these PRs were created by Hermes, using the local AI.

So we went from this:

to this:

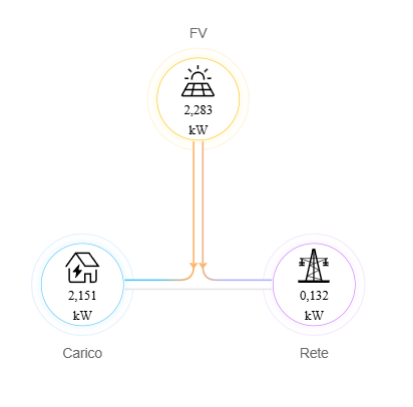

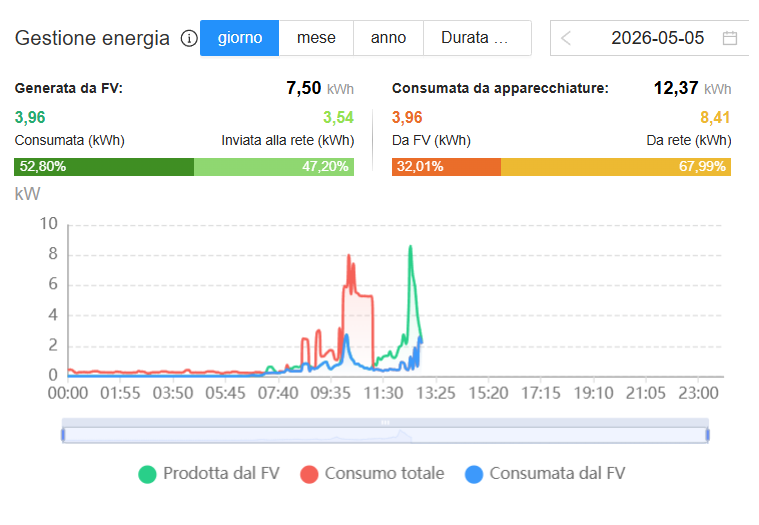

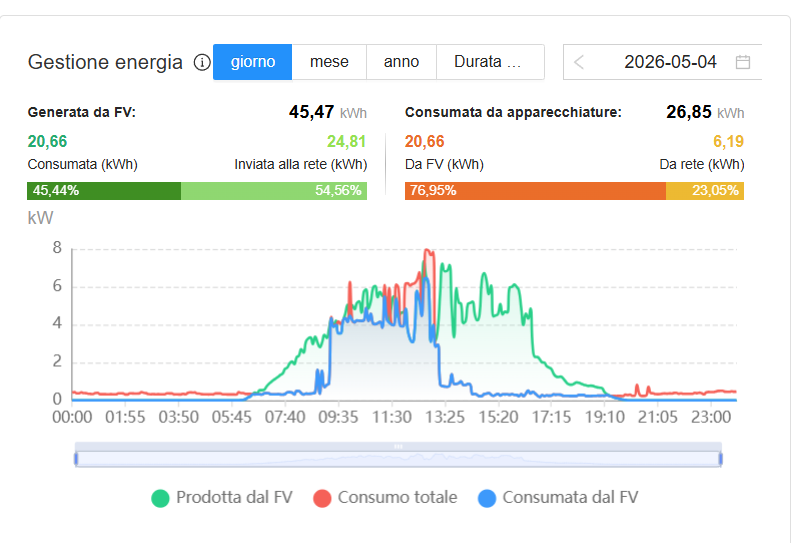

4. Energy management solution for solar panel + EV charging

At home I’ve got a solar panel installation and an electric car.

My solar panels can output something like 7 to 8 kW of power, and the car can be tuned to absorb 3, 4, 5 or more kW.

The goal here is to make sure that what gets produced is used first by the house load, and then by the car.
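The "house first, then car" rule can be sketched in a few lines. This is a simplified illustration, not the controller my agent is building: the function name and the minimum and maximum charging limits are assumptions picked for the example.

```python
def car_charge_power_kw(
    solar_kw: float,
    house_load_kw: float,
    min_charge_kw: float = 1.4,  # assumed lowest power the charger accepts
    max_charge_kw: float = 7.0,  # assumed highest power the charger accepts
) -> float:
    """Power to send to the car: whatever solar is left after the house."""
    surplus_kw = solar_kw - house_load_kw
    if surplus_kw < min_charge_kw:
        # Not enough surplus: pause charging rather than pull from the grid.
        return 0.0
    return min(surplus_kw, max_charge_kw)


# Sunny, quiet house: most of the production goes to the car.
print(car_charge_power_kw(solar_kw=6.0, house_load_kw=1.5))   # 4.5
# Cloudy: the house takes nearly everything, so the car waits.
print(car_charge_power_kw(solar_kw=2.0, house_load_kw=1.5))   # 0.0
# More surplus than the charger can take: cap at the maximum.
print(car_charge_power_kw(solar_kw=10.0, house_load_kw=1.0))  # 7.0
```

A real controller would also need to smooth out passing clouds so the charger is not switched on and off every few seconds.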

Quick snapshot: instant production 2025/05/05

Today’s production graph

Yesterday’s production graph:

As you can see, the blue block is when the car is recharging during solar power generation. How much it gets, however, depends a lot on the weather (more or less cloudy) and on how much the house is using.

This type of technology exists if you have the battery from the same brand. I’ve got a Huawei solar inverter and a Tesla Wall Charger with a Model 3, so I need to build my own.

I’ve got my local Hermes working on this and, while it’s a work in progress, we already have the first part: reading the power over Modbus.
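Modbus registers are 16 bits wide, so a larger value such as a power reading usually spans two consecutive registers. Here is a minimal sketch of the decoding step only, assuming the reading is a signed 32-bit integer in watts with the high word first. Both the layout and the unit are assumptions to check against the inverter's register map, and fetching the registers from the device is left out.

```python
import struct


def decode_int32(registers: list[int]) -> int:
    """Combine two 16-bit Modbus registers (high word first) into a signed 32-bit int."""
    if len(registers) != 2:
        raise ValueError("expected exactly two 16-bit registers")
    high, low = registers
    raw = struct.pack(">HH", high, low)  # big-endian, two unsigned 16-bit words
    return struct.unpack(">i", raw)[0]   # reinterpret as one signed 32-bit value


def watts_to_kw(watts: int) -> float:
    """Convert a reading in watts (assumed unit) to kW."""
    return watts / 1000


# Made-up register values, for illustration only.
producing = decode_int32([0x0000, 0x1388])
print(producing, "W =", watts_to_kw(producing), "kW")  # 5000 W = 5.0 kW
print(decode_int32([0xFFFF, 0xFC18]))                  # -1000 (negative values work too)
```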

Conclusion

I have some more examples (sending emails, creating an application to track expenses, reworking the Ippocra marketing website, and so forth).

LocalAI is here and can work very well with the delegation approach. Give it a go.

Till next time.

Have fun!